The Dark Side of AI-Generated Content (And How to Use It Safely)

Aug 26, 2025 | 3 Min Read

AI-generated content offers powerful creative possibilities but also carries serious dangers: it can homogenize creativity, spread misinformation, amplify bias, and undermine authenticity. This article examines these risks—the “dark side” of AI—and outlines safe use principles such as transparency, human oversight, contextual deployment, and legal accountability to ensure AI becomes a supportive tool rather than a threat to truth and human expression.

Background

We stand at the precipice of a creative revolution. Artificial intelligence has democratized content creation, enabling anyone to produce professional-quality images, videos, and text within minutes. ChatGPT crafts prose, Midjourney renders stunning visuals, and deepfake technology creates convincing videos of people saying things they never said. This technological marvel promises to unleash human creativity like never before—but beneath the surface lies a more troubling reality.

While society marvels at AI’s creative prowess, we have systematically underestimated the systemic risks inherent in widespread adoption of AI-generated content. The dangers extend far beyond simple misuse or deliberate deception. They strike at the very foundations of truth, creativity, and human expression. The thesis of this analysis is stark: AI-generated content, despite its obvious benefits, poses fundamental threats to intellectual authenticity, epistemic integrity, and creative diversity that demand immediate and comprehensive action.

The Illusion of Creativity

The greatest myth surrounding AI-generated content is that it represents genuine creativity. In reality, AI systems do not create—they recombine. Every “original” image, story, or piece of music is fundamentally a sophisticated remix of existing human work, filtered through algorithmic processes that obscure their derivative nature.

Recent research reveals the scope of this deception. A study by Doshi and Hauser published in Science Advances found that AI-assisted stories received higher creativity ratings from human evaluators, with improvements of 8.1% in novelty and 9.0% in usefulness when writers had access to five AI ideas, yet these same stories were significantly more similar to each other than purely human-created works (Science Advances). The researchers concluded that “generative AI–enabled stories are more similar to each other than stories by humans alone,” pointing to “an increase in individual creativity at the risk of losing collective novelty.”

This finding exposes the fundamental paradox of AI creativity: while individual works may appear more polished and “creative,” the aggregate effect is homogenization. We are witnessing the emergence of what researchers describe as a “social dilemma” where individual creators benefit from AI assistance while collectively producing “a narrower scope of novel content.”

The implications extend beyond aesthetics. When a flood of AI-generated content saturates creative markets—from stock photography to marketing copy to academic writing—it creates a feedback loop of mediocrity. Original human expression becomes increasingly rare, not because humans have lost their creative capacity, but because AI has made derivative work so efficient that genuine creativity appears economically irrational.

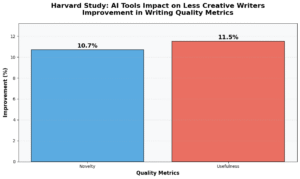

More controversially, evidence suggests that reliance on AI-generated ideas may atrophy human creative faculties. The same study found that less creative writers showed the greatest improvement when using AI tools, with novelty increases of 10.7% and usefulness improvements of 11.5%. While this appears positive, it raises a disturbing question: are we creating a generation of creators who can no longer function without algorithmic assistance? Rather than liberation, AI may represent the ultimate creative crutch, one that slowly weakens the very muscles it claims to strengthen.

Misinformation at Scale

AI’s capacity to generate convincing yet false content has reached a critical tipping point. According to Security.org’s comprehensive 2024 deepfake analysis, deepfake fraud incidents increased by over 1,000% between 2022 and 2023, with damages reaching as high as 10% of companies’ annual profits. The technology has become so accessible that just three seconds of audio can produce an 85% voice match, and 70% of people report they cannot confidently distinguish between real and cloned voices.

The scale of potential manipulation is staggering. Security.org reports that false information spreads six times faster than truthful news on social media platforms, with the top 1% of rumors on Twitter reaching between 1,000 and 100,000 people while truthful news rarely reaches more than 1,000. When combined with AI’s ability to generate unlimited variations of false narratives, we face an unprecedented threat to epistemic integrity.

Consider recent examples: A deepfake of a British engineering firm’s CFO led to the transfer of $25 million to fraudulent accounts during a video conference with multiple AI-generated “employees.” In New Hampshire, deepfaked robocalls using President Biden’s voice discouraged thousands of voters from participating in the primary election—content that cost just $1 and took less than 20 minutes to create.

The most insidious aspect of AI-generated misinformation is not its sophistication, but its industrialization. Content creators and platforms that heavily integrate AI generation tools are becoming complicit in the erosion of public trust, whether they acknowledge it or not. Every AI-generated news summary, every synthetic voice narration, every algorithmically composed social media post contributes to a media environment where the distinction between authentic and artificial becomes increasingly meaningless.

This represents more than a technological challenge: it constitutes a crisis of epistemic authority. When audiences can no longer distinguish between human-authored and AI-generated content, the very foundation of informed democratic discourse crumbles. Platforms and creators who deploy AI without robust safeguards are not merely adopting new tools; they are actively participating in the destruction of shared reality.

Ethical Blind Spots

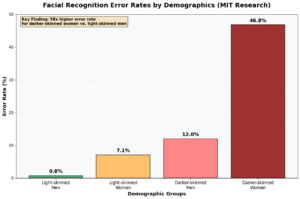

The bias embedded in AI-generated content is not a bug—it is a feature. AI systems reflect and amplify the biases present in their training data with algorithmic precision and unprecedented scale. Research from MIT has documented systematic bias in commercial facial recognition systems, with error rates for darker-skinned women reaching as high as 46.8%, while error rates for light-skinned men never exceeded 0.8%.

These disparities are not technical accidents but inevitable consequences of training AI systems on data that reflects historical inequalities and systemic discrimination. According to research published in Nature, when AI systems are trained on biased datasets, they don’t simply reproduce existing biases—they systematically amplify them.

The corporate response to these documented biases reveals the depth of the ethical crisis. Despite years of research documenting systematic discrimination in AI systems, the promised solutions—algorithmic bias mitigation, diverse training datasets, fairness-aware machine learning—have largely failed to address fundamental problems. A 2024 MIT study found that purported bias reduction techniques often simply shift discrimination rather than eliminate it, creating new forms of algorithmic unfairness while providing corporations with the veneer of ethical compliance.

This represents corporate theater, not genuine protection. Technology companies invest billions in “AI ethics” initiatives while simultaneously deploying systems that perpetuate and scale discriminatory practices. The rhetoric of bias mitigation serves primarily to deflect criticism while maintaining the profitable status quo of biased AI systems.

The ethical implications extend beyond individual discrimination to collective representation. AI-generated content systematically underrepresents marginalized voices, perspectives, and experiences because these communities are underrepresented in training data. When AI systems generate content about social issues, historical events, or cultural practices, they embed and amplify dominant cultural narratives while marginalizing alternative viewpoints. This is not neutral technology—it is ideological reproduction disguised as objective content generation.

Intellectual Property & Authenticity Crisis

AI-generated content has shattered traditional frameworks of intellectual property and creative attribution. The fundamental question is not whether AI companies have technically violated copyright law, but whether they have systematically undermined the entire concept of creative ownership.

Current AI training practices involve scraping and processing millions of copyrighted works without permission or compensation. According to Harvard Business Review’s analysis of generative AI intellectual property issues, this practice creates “infringement and rights of use issues, uncertainty about ownership of AI-generated works, and questions about unlicensed content” (Harvard Business Review). The U.S. Copyright Office has received over 10,000 comments on AI copyright issues, indicating the scale of legal uncertainty.

The conventional framing of this issue—focusing on legal technicalities and potential licensing schemes—misses the deeper crisis. The real theft is not from artists, but from audiences who are systematically deprived of authentic human expression. When AI-generated content floods creative markets, it creates an authenticity crisis where genuine human creativity becomes indistinguishable from algorithmic recombination.

This represents more than economic disruption—it constitutes cultural appropriation at industrial scale. AI systems extract the creative labor of millions of human artists, writers, and creators, then repackage and redistribute that creativity without acknowledgment or compensation. The resulting content may be technically legal, but it represents a fundamental violation of creative integrity.

The collapse of attribution norms extends beyond individual creators to entire cultural traditions. AI systems trained on diverse cultural content can generate works that superficially resemble traditional art forms, music styles, or literary traditions without any understanding of their cultural significance or historical context. This creates a form of algorithmic colonialism where traditional knowledge and creative practices are extracted, processed, and redistributed by technology companies based primarily in Silicon Valley.

Safe Use Principles

Despite these risks, AI-generated content tools are not inherently evil—they are powerful technologies that demand responsible governance. Effective risk mitigation requires four core principles:

Transparency through mandatory labeling represents the foundation of safe AI content practices. The European Union’s AI Act, which entered into force in August 2024, requires providers to clearly mark AI-generated content, particularly when it could be mistaken for human-created work (European Union). California’s AI Transparency Act, taking effect in January 2026, goes further by requiring AI companies to provide free detection tools for identifying AI-generated content (Mayer Brown).

Human oversight and editorial review must remain mandatory for any AI-generated content intended for public consumption. This means requiring human editors to review, verify, and approve AI-generated content before publication. The goal is not to eliminate AI assistance, but to ensure human accountability and judgment remain central to content creation processes.

Contextual deployment involves using AI as a tool for assistance rather than replacement. Research shows that AI tools provide the greatest benefit to less experienced creators while offering minimal improvement to highly creative individuals. This suggests AI should be positioned as a learning aid and creative enhancement tool rather than a substitute for human creativity.

Legal accountability requires holding human creators and publishers legally responsible for AI-generated content they deploy. This includes liability for biased, discriminatory, or harmful content produced by AI systems, regardless of the specific technical mechanisms involved. Clear legal responsibility creates incentives for careful, ethical AI deployment.

Conclusion

The integration of AI-generated content into creative and informational ecosystems represents a pivotal moment in human history. The technology offers genuine benefits—democratized content creation, enhanced productivity, and new forms of creative expression. However, these benefits come with systemic risks that extend far beyond individual misuse.

AI-generated content threatens to homogenize creative expression, industrialize misinformation, amplify systematic biases, and undermine intellectual authenticity. These are not theoretical future risks—they are measurable present realities documented by rigorous research and emerging in real-world applications.

The path forward requires acknowledging that AI-generated content is not inherently creative, neutral, or harmless. It is a powerful technology that reflects and amplifies existing power structures, biases, and inequalities. Responsible deployment demands transparency, human oversight, contextual application, and legal accountability.

Ultimately, the future of human creativity depends less on advances in AI technology than on our collective discipline in constraining its influence. The question is not whether we can make AI more creative, but whether we can preserve space for authentic human expression in an increasingly algorithmic world. The stakes could not be higher: the preservation of truth, creativity, and the irreplaceable value of genuine human thought.


References

- Doshi, A. R., & Hauser, O. P. (2024). Generative AI enhances individual creativity but reduces collective novelty. Science Advances, 10(24).

- Koivisto, M., & Grassini, S. (2023). Best humans still outperform artificial intelligence in a creative divergent thinking task. Scientific Reports, 13, 13673.

- Buolamwini, J., & Gebru, T. (2018). Gender Shades: Intersectional Accuracy Disparities in Commercial Gender Classification. MIT News.

- Franceschelli, G., & Musolesi, M. (2023). Generative AI Has an Intellectual Property Problem. Harvard Business Review.

- U.S. Copyright Office. (2024). Copyright and Artificial Intelligence.

- European Union. (2024). AI Act | Shaping Europe’s digital future.

- Mayer Brown. (2024). New California Law Will Require AI Transparency and Disclosure Measures.

- Bellaiche, L., et al. (2023). Humans versus AI: whether and why we prefer human-created compared to AI-created artwork. Cognitive Research, 8, 42.

- Crawford, K., & Schultz, J. (2023). Generative AI Is a Crisis for Copyright Law. Issues in Science and Technology.